Sleeper is a new set of features for nodenogg.in. It allows the node text gathered by a team to be compared with a reading list (a small set of data) and suggests ways for teams of students and researchers to consider new avenues for idea generation. The idea is to allow your words as a team to:

EXPLORE, REVEAL and EXPERIMENT with the CORPUS (reading list)

The original version of this idea came out of my PhD. The rough draft can be read on Manifold, as can the funded application. The pilot funding has been provided by the Web Science Institute (WSI) to support creating the SleepeR proof of concept. I will then look to apply for further funding when I report back on the pilot’s outcome to the WSI in the summer of 2023.

So, I have assembled a small team of interested and useful individuals, Lesia Tkacz, Ash Ravi and Maddie Dwyer, who are helping to make and test this proof of concept.

Our roles are:

Leads

Adam Procter: Project Lead. I am responsible for the project, leading the ideas and working directly with students and staff in nodenogg.in.

Lesia Tkacz: Product Lead. Lesia brings prior experience of Natural Language Processing (NLP) and “old school” Artificial Intelligence (AI) to the project, and is able to translate my ideas into words that the technical team can muse on.

Technical Team

Ash Ravi: NLP Research Engineer and chief builder of the backend Python stuff. Ash is making the Sleeper features in Python for us to experiment with the CORPUS.

Maddie Dwyer: Human Interface Designer. Maddie will be working with Adam to imagine and code interface elements that bring the Python terminal stuff to life inside nodenogg.in itself, extending the nodenogg.in views to include new SleepeR views and interface elements.

The project team are using our internal Slack rather than our Discord for now, as we are all in that tool more often day to day. The broader discussion and output will be published openly via this blog, the report document and likely some posts in Discord, alongside my Obsidian notes on GitLab.

The code is also all being published openly under the GNU Affero General Public License Version 3, as are the Obsidian notes that I am taking from time to time as the project progresses. This is all in a new GitLab group.

Part 1

In the first week, Lesia helped to extract the texts we had selected to support the Games Design & Art TOY project into readable txt files. This first mini reading list became our initial CORPUS. We also agreed some internal terms to help organise ourselves.

CORPUS = texts, journals, and books from the “reading list”

DOCUMENT = the selected text from the CORPUS

KEYWORDS = words extracted from the JSON file from each team’s microcosm nodes

EXTRACT = span of text in the DOCUMENT that contains the KEYWORDS

For our first CORPUS we brought in this initial set of texts:

- Excerpt from Roland Barthes from Mythologies on Toys

- Reay, E. (2022) ‘Immateriality and Immortality: Digital Toys in Videogames’, Playful Materialities

- Giddings, Seth (2019) ‘Toying with the singularity: AI, automata and imagination in play with robots and virtual pets’, in Giovanna Mascheroni & Donell Holloway (eds) The Internet of Toys: practices, affordances and the political economy of children’s smart play. Palgrave Macmillan.

- Heljakka, K. (2017) ‘Toy fandom, adulthood, and the ludic age: creative material culture as play’, Fandom, Second Edition: Identities and Communities in a Mediated World, edited by Jonathan Gray, Cornel Sandvoss and C. Lee Harrington, New York, USA: New York University Press, 2017, pp. 91-106.

- Blasdel, A. (Nov. 2022), ‘“They want toys to get their children into Harvard”: have we been getting playthings all wrong?’, The Guardian, https://www.theguardian.com/lifeandstyle/2022/nov/24/have-toys-got-too-brainy-how-playthings-became-teaching-aids-young-children

Lesia then used an existing programme called AntConc to test some ideas on how we might take keywords and compare them with the CORPUS. The overall suggestion was to start simple, using TF-IDF (term frequency-inverse document frequency) to compare keywords from nodenogg.in microcosms with the CORPUS.

Part 2

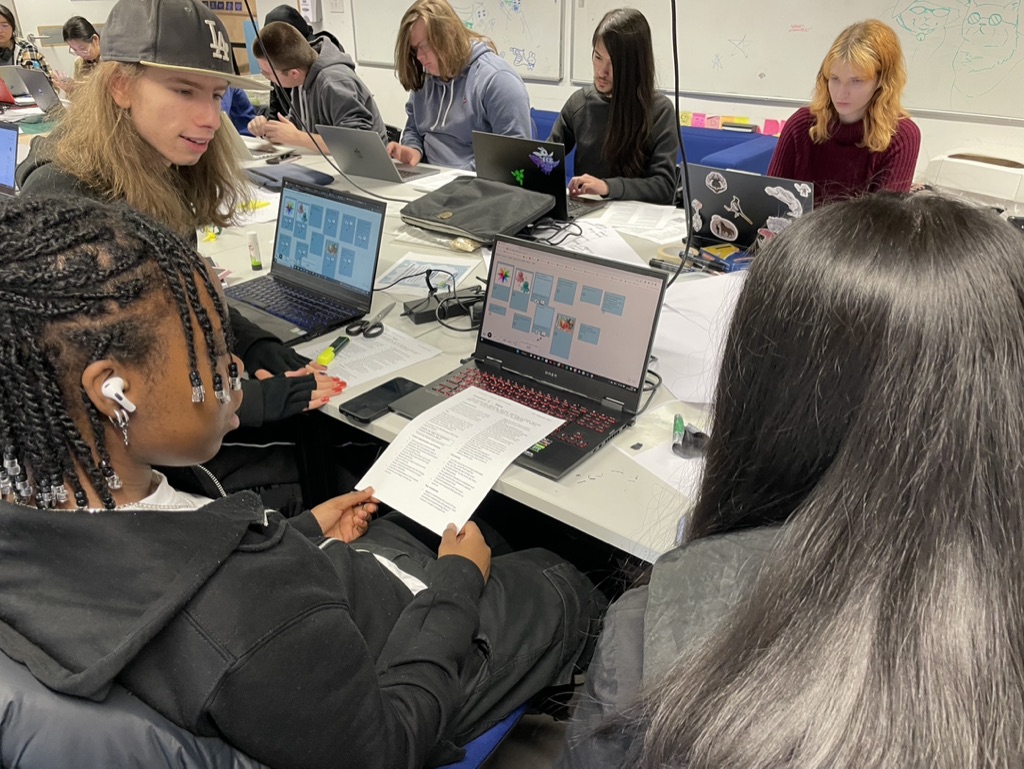

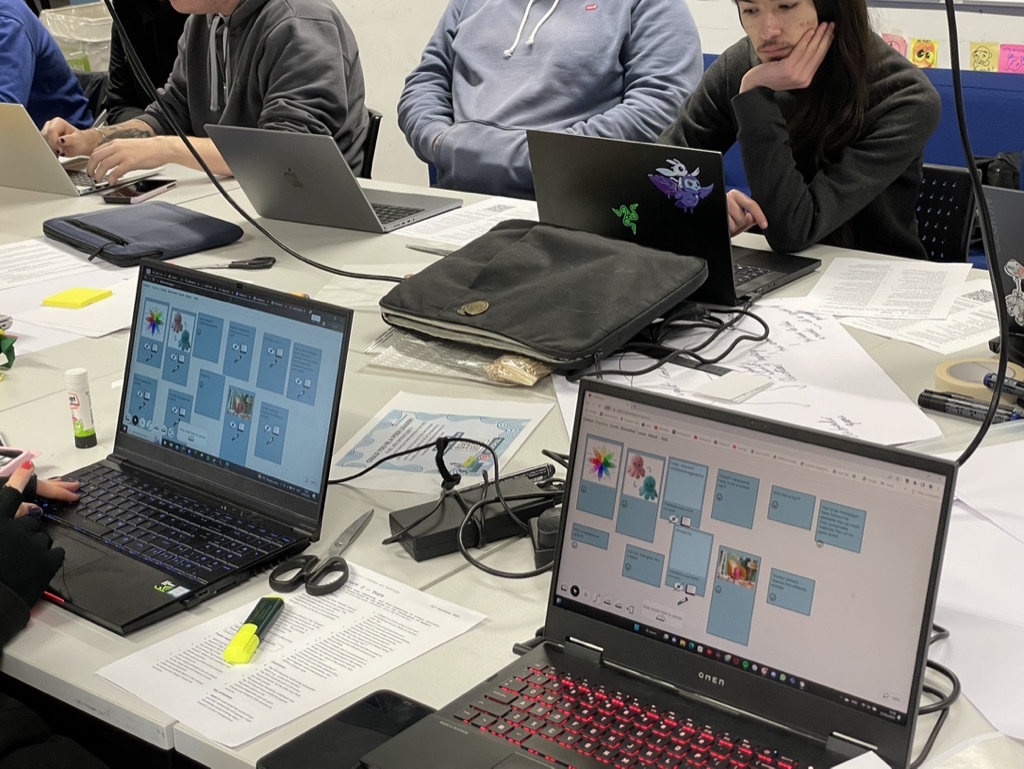

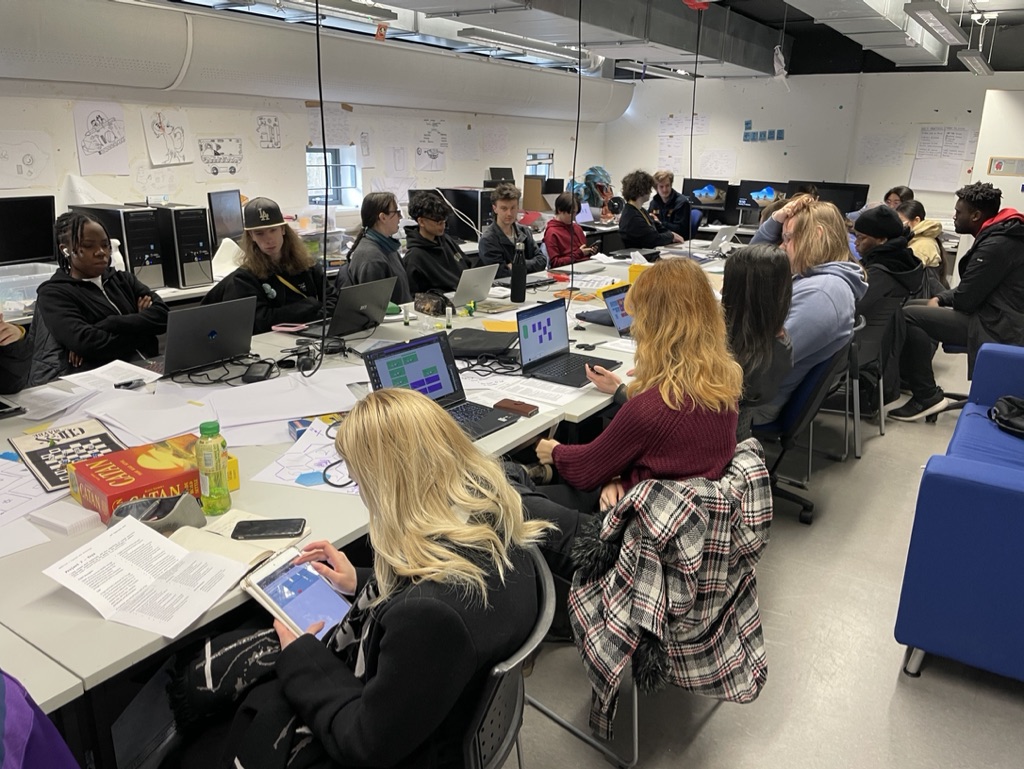

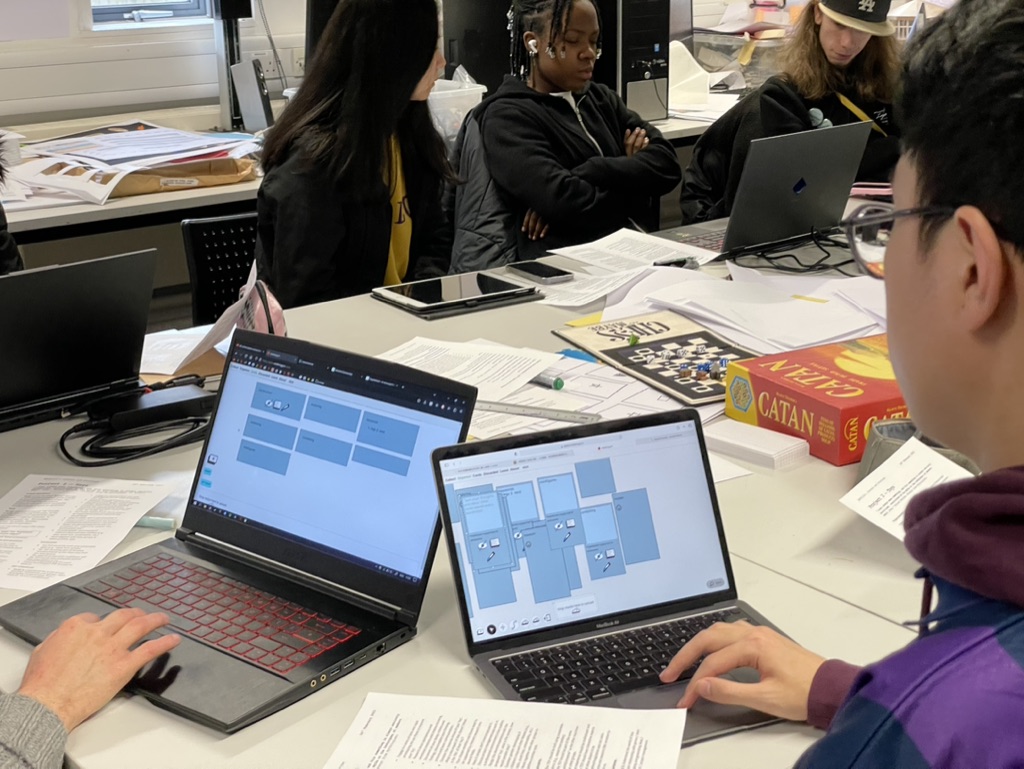

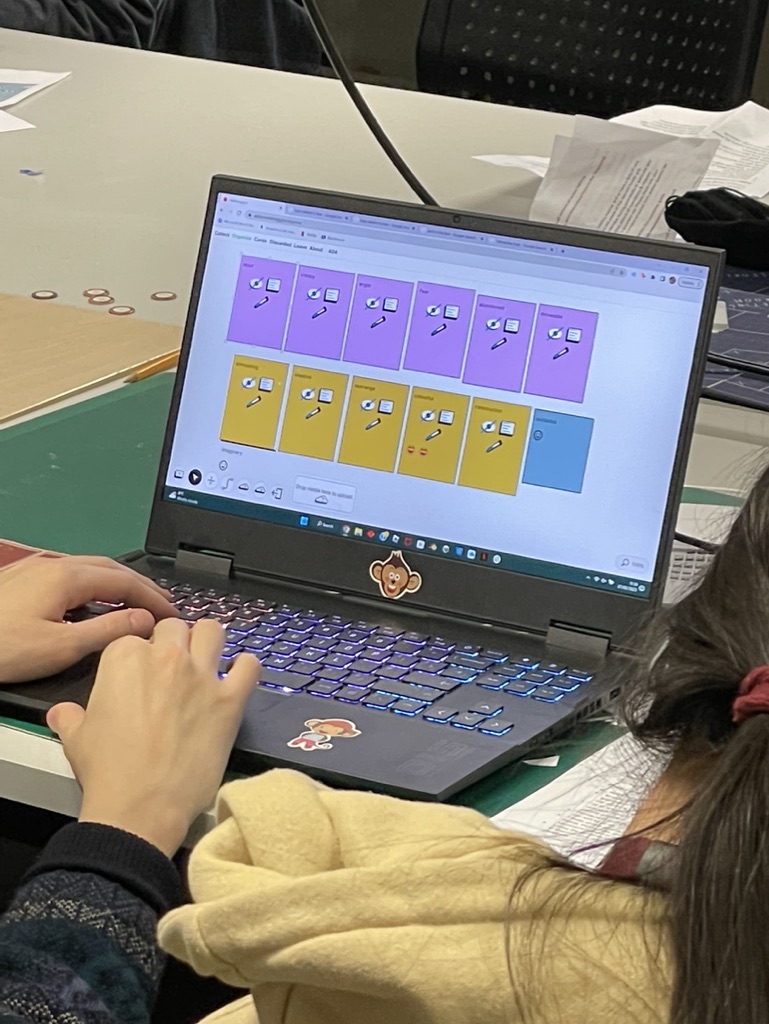

Next, I set out to gather some real keyword data. In the games studio, I explained the overall concept of nodenogg.in to year 1 Games Design & Art students, along with how the new SleepeR feature would recommend readings from the CORPUS. After the field trip to Legoland, the students were placed into their teams. Each team was to work collectively on idea gathering, using nodenogg.in and its Collect view to gather thoughts. I ran three sessions in that one day.

These exercises using nodenogg.in included:

Exercise 1: create single nodes with keywords, as many keywords as each team could think of, specifically on the Legoland field trip.

Exercise 2: write together in nodenogg.in nodes, sharing thoughts and ideas on the emotions chosen for the thematic underpinning of TOY: Sadness, Joy, Anger, Fear, Expectation, Surprise, Acceptance, Disgust.

Exercise 3: use nodenogg.in to think out loud and gather general ideas and visuals related to Toys, Craft and Textiles. One member of each team had to visit the library and bring back physical items.

While this was underway, Ash was turning the concept outlined above into a small Python programme. The programme would take the keywords (JSON) from exercise 1 in nodenogg.in and use the CORPUS to do a TF-IDF lookup, providing each team with the “top” DOCUMENT they should read.

Here are the results from each team: the top 5 keywords from their microcosm, the score applied to each word, and the top DOCUMENT they should read.
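To make the idea of a TF-IDF lookup concrete, here is a minimal sketch using only the Python standard library. It is not Ash's actual programme. The JSON shape (`nodes` with a `text` field), the function names and the two tiny sample documents are all made up for illustration, and the scoring is just one common TF-IDF variant.

```python
import json
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into alphabetic word tokens."""
    return re.findall(r"[a-z]+", text.lower())


def rank_documents(keywords, corpus):
    """Score each DOCUMENT by the summed TF-IDF weight of the KEYWORDS.

    corpus maps a file name to its full text. Returns (name, score)
    pairs with the best match first.
    """
    keywords = [keyword.lower() for keyword in keywords]
    tokens = {name: tokenize(text) for name, text in corpus.items()}
    n_docs = len(tokens)
    # Document frequency: how many DOCUMENTS contain each word at least once.
    doc_freq = Counter(word for words in tokens.values() for word in set(words))

    scores = {}
    for name, words in tokens.items():
        counts = Counter(words)
        total = 0.0
        for keyword in keywords:
            if counts[keyword] == 0:
                continue  # keyword absent from this DOCUMENT
            tf = counts[keyword] / len(words)
            # Smoothed IDF: rarer words across the CORPUS weigh more.
            idf = math.log((1 + n_docs) / (1 + doc_freq[keyword])) + 1
            total += tf * idf
        scores[name] = total
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


# Hypothetical microcosm export; the real nodenogg.in JSON may look different.
microcosm_json = '{"nodes": [{"text": "lego"}, {"text": "creativity"}, {"text": "shop"}]}'
keywords = [node["text"] for node in json.loads(microcosm_json)["nodes"]]

# Made-up stand-ins for the txt files in the CORPUS.
corpus = {
    "doc_a.txt": "lego bricks invite creativity and lego play in the shop",
    "doc_b.txt": "robots and virtual pets are toys that seem to think",
}

ranking = rank_documents(keywords, corpus)
top_document = ranking[0][0]
print(top_document)
```

In this sketch only single-word keywords can match, since each DOCUMENT is split into individual words. Phrases would need a different matching step.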

`toy9.json`

` scores`

`storytelling 0.415010`

`combining 0.415010`

`land 0.207505`

`inside 0.207505`

`message 0.207505`

`'guardian_article_they_want_toys_to_get_their_children_into_harvard.txt'`

`toy10.json`

` scores`

`welcome 0.367053`

`dreamer 0.367053`

`audience 0.367053`

`player 0.296136`

`education 0.296136`

`'Reay Digital Toys.txt'`

`toy11.json`

` scores`

`welcome 0.311668`

`themed 0.311668`

`curiosity 0.311668`

`whimsical 0.311668`

`dreamer 0.311668`

`'Reay Digital Toys.txt'`

`toy12.json`

` scores`

`welcome 0.562550`

`system 0.376746`

`created 0.376746`

`color 0.281275`

`creepy 0.281275`

`'giddings_Toying-with-the-Singularity.txt'`

`toy13.json`

` scores`

`shopping 0.368065`

`welcome 0.368065`

`realworld 0.368065`

`educational 0.296953`

`holiday 0.296953`

`'guardian_article_they_want_toys_to_get_their_children_into_harvard.txt'`

`toy15.json`

` scores`

`stimulation 0.369146`

`welcome 0.369146`

`dragon 0.297824`

`build 0.297824`

`lego 0.247221`

`'giddings_Toying-with-the-Singularity.txt'`

` scores`

`lego 0.596953`

`shop 0.297119`

`creativity 0.198984`

`park 0.148560`

`rest 0.148560`

`'guardian_article_they_want_toys_to_get_their_children_into_harvard.txt'`

After the teams had all had their top DOCUMENTS returned, we ran a small survey. Of the students who responded, most had not read any of the DOCUMENTS in the CORPUS, and all said they were now more likely to read their “top” DOCUMENT.

Part 3

Next we discussed what would make this more useful, such as providing a view into the CORPUS, perhaps by taking the “top” DOCUMENT and showing detail such as an EXTRACT.

We also tested adding two more DOCUMENTS from the broader reading list to our CORPUS, to see what impact that might have on the data.
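One possible way to pull an EXTRACT out of a DOCUMENT is sketched below. It assumes an EXTRACT is a whole paragraph, with paragraphs separated by blank lines, and ranks paragraphs by how many distinct KEYWORDS they mention. The function name `find_extracts`, the `max_extracts` parameter and the sample text are invented for illustration and are not part of the SleepeR code.

```python
import re


def find_extracts(document_text, keywords, max_extracts=3):
    """Return the paragraphs of a DOCUMENT that mention the most KEYWORDS."""
    wanted = {keyword.lower() for keyword in keywords}
    # Treat one or more blank lines as a paragraph break.
    paragraphs = [p.strip() for p in re.split(r"\n\s*\n", document_text) if p.strip()]

    scored = []
    for position, paragraph in enumerate(paragraphs):
        words = set(re.findall(r"[a-z]+", paragraph.lower()))
        matched = sorted(wanted & words)
        if matched:
            scored.append((len(matched), position, paragraph, matched))

    # Most distinct keywords first; earlier paragraphs win ties.
    scored.sort(key=lambda item: (-item[0], item[1]))
    return [(paragraph, matched) for _, _, paragraph, matched in scored[:max_extracts]]


# Made-up sample text standing in for a DOCUMENT from the CORPUS.
document = (
    "Toys are often miniature copies of the adult world.\n\n"
    "A box of lego bricks rewards creativity more than a finished model.\n\n"
    "The theme park shop sells lego sets by the exit."
)

extracts = find_extracts(document, ["lego", "creativity", "shop"])
for paragraph, matched in extracts:
    print(matched, "->", paragraph)
```

Returning the matched keywords alongside each paragraph would let an interface highlight them when the EXTRACT is shown next to the “top” DOCUMENT.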

- Rules of play: game design fundamentals

- The art of game design: a book of lenses

We didn’t have a chance to return these results to the students. It may also be worth running the same data from nodenogg.in against competing CORPUS sets, to push and pull project ideas between game theory/practice and other external concepts from two CORPUS banks.

Key takeaways and next steps

Even with the simple recommendation of one text for each team to focus on, students said they were more likely to read the provided material. This suggests that they had not read the material even though it had been provided well in advance of the project starting, and that this simple action activated the readings.

- Pull out paragraph data and present that alongside top